AI insights

What is the main takeaway of "In the Age of AI, Human Capability Is the Real Advantage"?

The article argues that being "AI-first" often becomes being tool-first, which can make work thinner, colder, and less centered on the user. Its core claim is that tools can increase output, but products are ultimately shaped by people using judgment to serve human needs.

What mistake does the article say teams are making with AI?

It says many teams have made a "quiet and costly mistake": designing for the machine instead of the human trying to get something done. The result is product drift, where efficiency rises but the user disappears from the conversation.

What does the article mean by human capability, and why is it the real advantage?

The article frames human capability as the judgment that actually ships products and keeps them useful for people, not just optimized for tools. This aligns with the related leadership piece, which warns that fluent AI output can look complete even when evidence is thin, making human discernment the real safeguard.

-

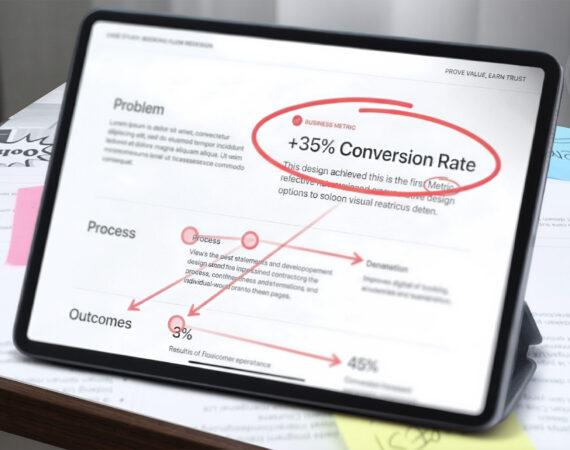

Is there a concrete metric or data point mentioned in the related articles that helps make this practical?

Yes: the leadership article recommends a 15–20 minute "structured clarity" ritual as "speed insurance" to prevent confident but weak decisions. Paired with the primary article's warning about tool-first thinking, that gives readers a concrete time-box for re-centering judgment before shipping or approving work.

How can teams apply the article's advice in practice?

A practical approach is to treat AI as a tool for faster output, then deliberately check whether the work still reflects the human problem to be solved. The agency article extends this into a workflow shift: move from task execution to strategic orchestration that blends AI efficiency with human-centered insight and ethics.

What misconception should readers avoid when adopting AI-heavy workflows?

A key misconception is assuming polished AI output equals sound thinking. The primary article says tools do not ship products on their own, and the systems article reinforces that point by arguing that patterns are cheap now, while judgment, critical thinking, research, communication, and empathy remain the hard part.

What should I read next if I want to go deeper on this topic?

A strong next read is "Leadership in the Age of Infinite Fluency" if you want a decision-making practice for testing AI-generated fluency before it hardens into bad strategy. If you're thinking about organizational value, "Redefining Agency Value in the Age of AI" is the closest follow-up because it focuses on strategic orchestration, capability building, and blending efficiency with ethics.

- The article argues that many teams calling themselves AI-first are really tool-first, which can make products faster but colder, less trustworthy, and less useful to real users.

- AI can speed production, but it cannot replace judgment, empathy, clear thinking, or the human research and testing that good design depends on.

- The author says weak briefs, bad assumptions, and shallow understanding get amplified by AI, creating polished output that looks right before it is proven useful.

- Design fundamentals like accessibility, governance, synthesis, and usability testing matter more with AI because faster delivery can also accelerate errors, rework, and legal risk.

- Strong teams start with human needs, context, trust, and constraints, then use AI as a tool rather than letting it drive decisions.

- The core claim is that real advantage comes from building human capability, because better tools only help when the people using them think and decide well.

Everyone wants to be AI-first right now.

I hear it in every meeting, every roadmap, every pitch deck. AI workflows. AI automation. AI agents. Faster output. Fewer bottlenecks. More scale.

Fine. Use the tools—but don’t let them use you.

A lot of teams say they are AI-first. What they usually mean is tool-first. That sounds modern until the work gets thinner, the product gets colder, and the user disappears from the conversation.

Tools don’t ship products. People with judgment do.

Somewhere in the noise, many teams have made a quiet and costly mistake: they started designing for the machine instead of the human being trying to get something done.

That is where products begin to drift. They get faster, but colder. Smarter, but harder to trust. More efficient, but less useful.

At UX Design Lab and through TCE (Team Capability Engine), we see the same pattern again and again: the teams that benefit most from AI are not the ones talking about it the most. They are the ones developing humans—people who can think clearly, understand context, make sound decisions, and stay rooted in the first principles of design.

In the age of AI, that is still the real advantage.

The Real Product Is Human Capability

AI can accelerate production, but it cannot replace judgment. If a team lacks clarity, empathy, alignment, or critical thinking, AI will not solve that problem. It will amplify it.

For a while, many teams believed the next leap would come from replacing messy human work with cleaner machine output. Fewer interviews. Less synthesis. Faster concepts. More generated options. On paper, it looked efficient.

In practice, it often exposed the same old problems wearing new clothes.

Weak briefs stayed weak. Bad assumptions moved faster. Teams confused polished language for strong thinking. The user disappeared under the weight of internal excitement.

The real danger of AI is not that it replaces designers. It is that it tempts teams to abandon the hard human work that made good design possible in the first place. That shortcut looks efficient right up until trust breaks.

We felt that tension ourselves. For a stretch, we pushed too hard toward AI-driven efficiency. We were generating concepts faster than we were understanding the people they were meant to serve. The speed was real. The quantity was impressive. But something essential started slipping. The work had movement without depth. It sounded right before it was proven right. It looked sharp before it was truly useful.

We moved faster with AI, but for a while, we also understood less. That was the warning sign.

The breakthrough came when we stopped chasing output and returned to the human truths good design has always depended on: listening before deciding, understanding before scaling, and testing before declaring success.

That experience clarified something simple: AI is not the product. The team is the product behind the product. The better the tools become, the more obvious it gets that the real product has always been the quality of the people behind it.

We built TCE to make that quality measurable. It shifts the focus away from counting output and toward verifying judgment, decision quality, operating discipline, and the skills that keep AI speed from turning into institutionalized waste.

AI is not the advantage. Human capability is.

Design Fundamentals Are Risk Management

In 2025, WebAIM found detectable WCAG failures on 94.8% of the top one million home pages, with an average of 51 errors per page. UsableNet tracked more than 4,000 digital accessibility lawsuits in 2024, including 961 repeat lawsuits against companies that had already been sued once. WCAG 2.2 added nine new success criteria. The message is no longer subtle: if AI accelerates interface production without human governance, it can accelerate noncompliance, rework, and legal exposure just as efficiently.

There is a strange idea circulating through product and design teams that AI has somehow made design rigor optional, as if research, accessibility, information architecture, usability testing, and DesignOps governance belonged to a slower era. In reality, the opposite is true. The more powerful the tool, the more expensive it becomes to skip the disciplines that keep decisions grounded, interfaces usable, and delivery accountable.

We have already seen the pattern in miniature. A flow can look polished in a demo, read well in a generated spec, and still fail where it matters most: keyboard navigation breaks, focus order becomes unpredictable, error messaging lacks recovery guidance, and the experience collapses the moment a real user tries to move through it without a mouse. None of that is a model problem. It is a human oversight problem. The tool produced something plausible; the team failed to apply the judgment, standards, and review gates that separate plausible from deployable.

That is why I do not see accessibility, governance, and synthesis as downstream checks. They are built into the thinking itself. Mature teams do not begin with the model and ask how quickly they can ship what it generates. They begin with the human being, the operating context, the risks, and the constraints. They ask what the user is trying to accomplish, where trust may break, what must remain visible and explainable, and what standards the experience must meet before anyone calls it done.

This is where DesignOps becomes decisive. When teams insert explicit human synthesis into the workflow (reviewing intent, validating assumptions, checking accessibility, and confirming decision quality before scaling), they do more than improve design quality. They reduce churn, prevent expensive rework, and keep AI from turning speed into institutionalized error.

These first principles are not slowing teams down. They are what keep velocity from becoming waste.

New Tools, Constant Human Needs

No matter how advanced the system becomes, people still need clarity, confidence, control, and care.

AI changed the speed of work. It did not change human nature.

Users still hesitate. They still doubt what they do not understand. They still carry stress, habits, fears, and imperfect attention into every interaction. They still need clarity, trust, and care. And no machine can fake its way past that for long.

That is why design remains rooted in empathy. Not because empathy sounds nice in a keynote, but because it is how you understand the reality on the other side of the interface.

A human-oriented process does not reject AI. It puts AI in its place.

Use it to accelerate exploration. Use it to reduce repetitive work. Use it to widen possibility. But do not let it replace observation, discernment, accountability, or human connection.

The future will not belong to the teams that automate the most. It will belong to the teams that know what must never be automated away.

That is the difference.

At UX Design Lab, we do not treat AI as a substitute for design thinking. We treat it as a tool inside a human-centered practice. We return to the scaffolding of good UX because it is what holds up under pressure. It is what helps products earn trust. It is what helps teams make better decisions, not just faster ones.

That is also the idea behind TCE. In the age of AI, the real scaling challenge is not simply generating more output. It is developing teams that can think, collaborate, govern, and decide well under pressure.

Not dependency. Not theater. Not noise.

Teams that can synthesize. Teams that can challenge assumptions. Teams that can design with empathy and operate with judgment in a world full of increasingly powerful tools.

That is still the work. And now it matters even more.

“The real problem is not whether machines think but whether men do.”

— B. F. Skinner

Takeaways

- AI can increase speed, but human capability still determines quality.

- Design fundamentals matter more now because AI amplifies weak thinking as easily as strong thinking.

- The teams that win will stay human-centered even while using powerful tools.

If your team is scaling speed but sacrificing judgment, let’s talk about how to embed human governance back into your delivery pipeline.