
- The article says enterprise AI teams must judge systems by real workflow performance, not polished demos, vendor claims, or executive enthusiasm.

- It applies Carl Sagan’s “baloney detection kit” to AI with three rules: demand independent evidence, argue against your own idea, and require testability.

- DesignOps and ResearchOps should enable self-service skepticism with eval templates, red-team checklists, ground-truth datasets, trust studies, and release gates inside normal delivery workflows.

- Teams should prove claims with concrete measures like cycle time, lead time, cost per successful task, change failure rate, and Human-to-AI Correction Ratio.

- AI quality must be stress-tested for bias, privacy, accessibility, drift, and harmful edge cases, with prompt changes and model updates triggering regression testing.

- The core warning is that authority, sunk cost, and fast shipping can hide unreliable systems that create technical debt, compliance risk, and lost trust.

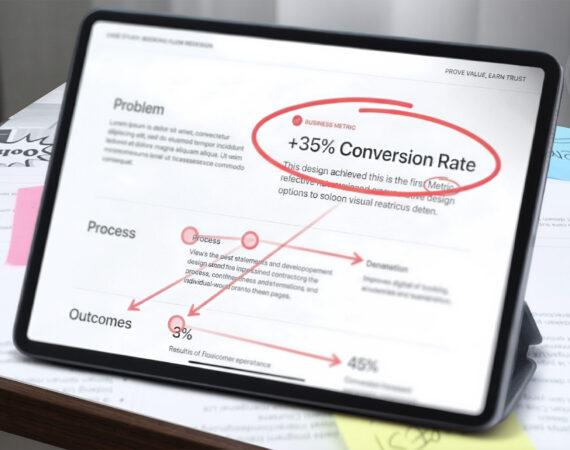

There is a familiar scene playing out in enterprise product teams right now. A vendor demo lands well. A prototype looks sharp. Leadership starts talking about acceleration, efficiency, maybe even transformation. Then the project moves forward before anyone has answered the only question that matters: will this system hold up inside a real workflow, with messy inputs, compliance constraints, cost pressure, and users who do not care how elegant the demo looked on Tuesday?

That is where Carl Sagan still earns his place.

In The Demon-Haunted World, Sagan described a “baloney detection kit,” a way of testing claims instead of falling for authority, wishful thinking, or performance. He wrote it for science and public life, but it fits AI product strategy unusually well. In enterprise settings, bad reasoning does not stay philosophical for long. It turns into sunk cost, technical debt, legal exposure, and product behavior that quietly breaks trust at scale.

Three parts of Sagan’s kit matter most here: demand independent confirmation, argue against your own position, and ask whether the idea can be tested. In enterprise AI, those principles become evidence standards, red-teaming, and evaluation gates. That is where the conversation has to move next.

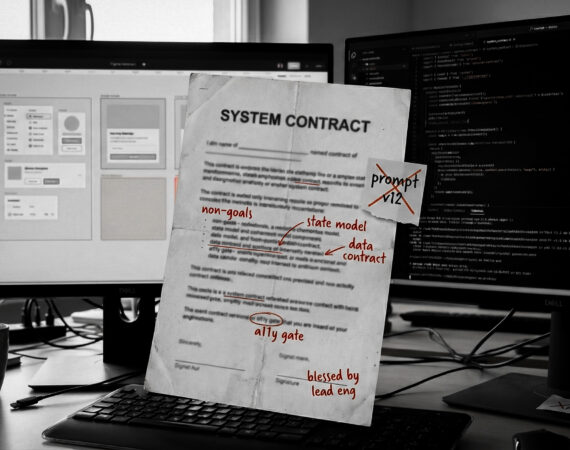

This is why the conversation cannot stop at product vision. In the age of AI, DesignOps and ResearchOps are not support functions sitting on the sidelines. They are the enablement layer that helps teams build evidence without creating a centralized approval bottleneck.

That distinction matters. At enterprise scale, DesignOps cannot become the baloney police for fifty product squads. It has to provide the tooling, standards, and operating rules that enable self-service skepticism: evaluation templates, prompt-testing suites, red-team checklists, model-change alerts, and delivery gates that teams can use within their own workflows. ResearchOps plays a parallel role by maintaining the ground-truth libraries, longitudinal trust studies, and human-in-the-loop orchestration that keep evaluation connected to actual user behavior rather than lab performance.

Just as important, this is cross-functional by design. Engineering usually owns the evaluation harness, observability, and automation pipeline. DesignOps should own the heuristics: the rubrics, quality definitions, and experience standards that define what “good” looks like before a test ever runs. Without that split, one team ends up measuring what is easy instead of what actually matters to users and the business.

Baloney vs. Evidence

| The Baloney Claim | The Evidence Required |
|---|---|
| “Our AI assistant accelerates task completion by 40%.” | Measurement of cycle time, lead time, Human-to-AI Correction Ratio (HACR), and change failure rate. |
| “The model is 98% accurate.” | A domain-specific eval set with an explicit risk-utility threshold tied to the blast radius of the use case. |
| “The UI is generated dynamically for every user.” | Automated WCAG 2.2 compliance gates, keyboard-navigation checks, screen reader validation, and accessibility regression testing. |
| “We’ve built a custom proprietary model.” | Benchmarking against commodity APIs to prove ROI after inference cost, maintenance, and model-induced drift. |
| “Our AI ensures data privacy and equity.” | Documented red-teaming logs for bias, a NIST-aligned risk assessment, and an audit trail for non-deterministic decisions. |
| “The vendor has enterprise AI covered.” | Audit of vendor eval datasets, SLA for drift notification, and performance proof against internal ground-truth benchmarks. |

Rule: Demand independent confirmation. Demand evidence, not enthusiasm

Why This Matters: AI systems sound convincing long before they are dependable.

Sagan’s first rule was to demand independent confirmation. In AI product work, that means a claim is not validated because a model provider published a benchmark, an executive liked a demo, or a team found five good examples in a workshop. It is validated when the system performs against the real job to be done.

That is where DesignOps has to get specific. “Evidence” in an AI program should mean versioned prompts, task-based evaluation sets, release criteria, and clear owners for quality. It should mean red lines for failure, not just applause for possibility. If a team says an assistant improves productivity, they should be able to show how that claim maps to actual delivery outcomes: cycle time, lead time, Human-to-AI Correction Ratio (HACR), change failure rate, latency, and cost per successful task. HACR is a simple operational ratio:

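$$
\text{HACR} = \frac{\text{AI outputs that needed material human correction}}{\text{total AI outputs reviewed}}
$$

It measures the share of AI outputs that are materially wrong or incomplete enough that a user has to correct them before work can move on.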

Note: If HACR rises above 0.5, meaning people are correcting more than half of the outputs, that should be treated as a stop gate for production until the workflow is re-evaluated.
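To make the stop gate concrete, here is a minimal Python sketch. It assumes HACR is the number of outputs that needed material correction divided by the number of outputs reviewed, which is how the 0.5 threshold above reads. The `ReviewedOutput` record and the function names are hypothetical, not part of any existing tool.

```python
from dataclasses import dataclass

# Threshold taken from the note above: more than half of outputs corrected.
HACR_STOP_THRESHOLD = 0.5


@dataclass
class ReviewedOutput:
    """One AI output after human review (hypothetical record shape)."""
    output_id: str
    needed_material_correction: bool


def hacr(outputs: list[ReviewedOutput]) -> float:
    """Share of reviewed outputs that a human had to materially correct."""
    if not outputs:
        raise ValueError("HACR is undefined with no reviewed outputs")
    corrected = sum(1 for o in outputs if o.needed_material_correction)
    return corrected / len(outputs)


def is_stop_gate(outputs: list[ReviewedOutput]) -> bool:
    """True when HACR is strictly above the stop threshold."""
    return hacr(outputs) > HACR_STOP_THRESHOLD


# Usage: 6 of 10 outputs needed correction, so the gate should trip.
reviewed = [ReviewedOutput(f"out-{i}", i < 6) for i in range(10)]
print(hacr(reviewed), is_stop_gate(reviewed))
```

What counts as a "material" correction is the hard part, and that definition belongs in the rubric, not in the code.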

This is where DORA metrics become useful. Bad AI can make lead time look better for a sprint or two because teams ship faster, while silently driving up change failure rate (CFR) when hallucinated logic, brittle prompts, or broken generated UI reach production. High speed with a spiking CFR is not acceleration. It is expensive self-deception.
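One way to catch that pattern is to compare two periods and flag the case where lead time improved while change failure rate got worse. The sketch below is illustrative only: the `Deployment` record, the use of median lead time, and the 0.05 tolerance are assumptions, not DORA definitions.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class Deployment:
    """One production deployment (hypothetical record shape)."""
    lead_time_hours: float  # commit to production
    caused_failure: bool    # needed a rollback, hotfix, or incident response


def change_failure_rate(deploys: list[Deployment]) -> float:
    if not deploys:
        raise ValueError("no deployments in this period")
    return sum(1 for d in deploys if d.caused_failure) / len(deploys)


def median_lead_time(deploys: list[Deployment]) -> float:
    return median(d.lead_time_hours for d in deploys)


def speed_is_masking_failures(
    before: list[Deployment],
    after: list[Deployment],
    cfr_tolerance: float = 0.05,  # assumed tolerance; tune per team
) -> bool:
    """True when lead time got shorter while CFR rose beyond the tolerance."""
    faster = median_lead_time(after) < median_lead_time(before)
    cfr_rise = change_failure_rate(after) - change_failure_rate(before)
    return faster and cfr_rise > cfr_tolerance
```

A `True` result is a prompt for a conversation about what shipped, not an automatic verdict.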

This is also the place for tangible governance. Prompt libraries need more than simple version control; they need semantic versioning. In practice, a system-prompt change is often a major version because it can alter behavior across the entire workflow and should trigger full regression against the ground-truth dataset. Smaller retrieval or formatting changes may be minor or patch versions, but they still need explicit traceability.
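A rough sketch of that versioning rule in Python follows. The mapping is an assumption for illustration: system-prompt changes bump the major version as described above, while retrieval changes are treated as minor and formatting changes as patch. A real team might draw those lines differently.

```python
from enum import Enum


class ChangeKind(Enum):
    SYSTEM_PROMPT = "system_prompt"  # behavior can shift across the workflow
    RETRIEVAL = "retrieval"          # assumed to be a minor change
    FORMATTING = "formatting"        # assumed to be a patch change


def bump(version: str, change: ChangeKind) -> str:
    """Return the next MAJOR.MINOR.PATCH version for a prompt change."""
    major, minor, patch = (int(part) for part in version.split("."))
    if change is ChangeKind.SYSTEM_PROMPT:
        return f"{major + 1}.0.0"
    if change is ChangeKind.RETRIEVAL:
        return f"{major}.{minor + 1}.0"
    return f"{major}.{minor}.{patch + 1}"


def requires_full_regression(old: str, new: str) -> bool:
    """A major bump triggers full regression against the ground-truth set."""
    return int(new.split(".")[0]) > int(old.split(".")[0])


# Usage: a system-prompt edit on 1.4.2 becomes 2.0.0 and forces regression.
new_version = bump("1.4.2", ChangeKind.SYSTEM_PROMPT)
print(new_version, requires_full_regression("1.4.2", new_version))
```

Whatever the mapping, the version string should travel with every evaluation result so a score can always be traced to the prompt that produced it.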

The real enterprise risk is prompt drift, and it often arrives with model-induced drift. A workflow that looked stable last month can degrade when the provider updates the underlying model, changes routing behavior, or silently adjusts safety layers underneath the same API surface. That is why prompt regression testing has to be a standard ritual, not an afterthought.
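A prompt regression run can be as small as the sketch below. It is a simplification under stated assumptions: `generate` stands in for whatever calls the model, scoring is a naive normalized exact match (real rubrics are usually richer), and the allowed drop of 0.02 is a placeholder, not a recommended value.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class GroundTruthCase:
    """One entry in a ground-truth dataset (hypothetical shape)."""
    case_id: str
    prompt_input: str
    expected: str


def run_regression(
    cases: list[GroundTruthCase],
    generate: Callable[[str], str],  # stand-in for the real model call
    baseline_pass_rate: float,
    max_drop: float = 0.02,          # assumed tolerance before flagging
) -> dict:
    """Re-run every case and flag a regression against the stored baseline."""
    if not cases:
        raise ValueError("ground-truth dataset is empty")
    failed = [
        c.case_id
        for c in cases
        # Naive scoring: case-insensitive exact match after trimming.
        if generate(c.prompt_input).strip().lower() != c.expected.strip().lower()
    ]
    pass_rate = 1 - len(failed) / len(cases)
    return {
        "pass_rate": pass_rate,
        "failed_cases": failed,
        "regressed": pass_rate < baseline_pass_rate - max_drop,
    }
```

Running the same function on a schedule, not only on prompt changes, is what surfaces drift introduced by the provider rather than by the team.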

This is where DesignOps should act as an enablement function rather than a gatekeeper. It can publish self-service eval templates, automated prompt-testing suites, model-change alerts, and standard rubrics that squads run on their own before release. LLM-as-a-judge can help scale assessment, but it should not be treated as magic. It works best when paired with human spot checks and clearly defined rubrics. Otherwise, the team is just automating its own blind spots.
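Pairing an automated judge with human spot checks can be sketched in a few lines. Everything here is an assumption for illustration: the 10% sample size, the pass/fail label shape, and the idea of tracking judge-human agreement as the health signal for the judge itself.

```python
import random


def sample_for_human_review(
    item_ids: list[str], fraction: float = 0.1, seed: int = 0
) -> list[str]:
    """Pick a reproducible random subset of judged items for human review."""
    if not item_ids:
        return []
    k = max(1, round(len(item_ids) * fraction))
    return random.Random(seed).sample(item_ids, k)


def agreement_rate(
    judge_labels: dict[str, bool], human_labels: dict[str, bool]
) -> float:
    """Share of doubly-labeled items where the judge and the human agree."""
    shared = judge_labels.keys() & human_labels.keys()
    if not shared:
        raise ValueError("no items were labeled by both judge and human")
    agreed = sum(1 for item in shared if judge_labels[item] == human_labels[item])
    return agreed / len(shared)
```

If agreement drops, the rubric or the judge prompt needs attention before its scores are trusted for a release decision.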

A strong demo proves that a model can perform. A strong workflow proves that the product can be trusted.

Rule: Argue against your own position. Stress-test the idea for failure, bias, and harm before the market does it for you

Why This Matters: The biggest AI failures rarely begin with weak ambition. They begin with weak self-critique.

Sagan pushed people to argue against their own position. Most organizations say they want this, but few build it into the work. In AI programs, that omission is expensive.

A serious enterprise team should run structured red-teaming workshops before a capability gets normalized into the roadmap. Not theater. Not a token security review. A real attempt to break the experience from multiple angles: misleading answers, harmful edge cases, prompt injection, privacy leakage, inaccessible interactions, algorithmic bias (including bias against protected classes), representational harms, over-trust, and role confusion between the human and the machine.

That red-team scope should explicitly include accessibility audit work. If the model generates a workflow that fails screen readers, breaks keyboard navigation, loses focus order, or creates low-contrast states, the experience is not innovative. It is noncompliant baloney in a regulated environment.
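Some of these checks are easy to automate. As one example, the low-contrast case can be tested with the WCAG contrast-ratio formula, using the 4.5:1 minimum that applies to normal-size text at level AA. The sketch below only handles six-digit hex colors, and the function names are hypothetical.

```python
def _channel_to_linear(value_8bit: int) -> float:
    """Convert one 8-bit sRGB channel to linear light, per the WCAG definition."""
    c = value_8bit / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#rrggbb'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (
        0.2126 * _channel_to_linear(r)
        + 0.7152 * _channel_to_linear(g)
        + 0.0722 * _channel_to_linear(b)
    )


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors: (lighter + 0.05) / (darker + 0.05)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(foreground: str, background: str) -> bool:
    """True when the pair meets the 4.5:1 minimum for normal-size text."""
    return contrast_ratio(foreground, background) >= 4.5
```

Contrast is only one criterion. Focus order, keyboard navigation, and screen reader behavior still need their own checks and human review.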

This is where one of Sagan’s sharpest warnings belongs: keep an open mind, but not so open that your brains fall out. AI strategy needs openness, but not the kind that confuses novelty with value or fluency with truth.

For product and UX leaders, this means requiring a premortem: if this feature fails six months from now, what probably caused it? For DesignOps, it means building the ritual into the operating model so dissent is not treated like negativity. The goal is not to kill ideas. It is to stop weak ideas from hiding inside executive enthusiasm.

Rule: Ask whether the idea can be tested. If it cannot be evaluated, it is not ready for enterprise scale

Why This Matters: AI quality is probabilistic, which means old testing habits are necessary but no longer sufficient.

Traditional UI testing is mostly deterministic. Does the button render? Does the flow submit? Does the permission state work? AI systems are different. The same input may produce different outputs, and the output may be fluent while still being wrong. That changes the job.
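In practice, that means a single passing run proves little. A minimal sketch of the alternative is to run the same input many times and report a pass rate. Here `generate` and `is_acceptable` are hypothetical stand-ins for the model call and the rubric check, and 20 runs is an arbitrary default.

```python
from typing import Callable


def pass_rate(
    generate: Callable[[str], str],        # stand-in for the model call
    is_acceptable: Callable[[str], bool],  # stand-in for the rubric check
    prompt: str,
    runs: int = 20,                        # arbitrary default, not a standard
) -> float:
    """Run the same prompt repeatedly and return the share of acceptable outputs."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passes = sum(1 for _ in range(runs) if is_acceptable(generate(prompt)))
    return passes / runs
```

The result is a rate to compare against a threshold, which is a different kind of assertion from the pass-or-fail checks used for deterministic UI.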

Baloney detection in AI requires a shift from “does the interface work?” to “is the system reliably useful for this use case, at this level of risk, under these operating conditions?” That is an evaluation problem, not just a QA problem.

The trap is pretending there is one universal quality bar. There is not. In a low-risk internal drafting tool, the team may accept a higher error rate because human review is built in and the blast radius is small. In a regulated workflow, the same error rate may be unacceptable because the potential exposure outweighs the utility. What matters is the risk-utility tradeoff: how much value the system creates, how much human oversight remains, and how much harm a bad answer can cause when it slips through.
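One way to keep that tradeoff explicit is to write the bar down per risk tier instead of per team. The tiers and numbers below are invented placeholders for illustration only. Real thresholds would come from the team's own risk review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RiskTier:
    """Quality bar for one class of use case (hypothetical shape)."""
    name: str
    max_error_rate: float
    human_review_required: bool


# Placeholder tiers and thresholds, not recommendations.
TIERS = {
    "internal_drafting": RiskTier("internal_drafting", 0.10, True),
    "regulated_workflow": RiskTier("regulated_workflow", 0.01, True),
}


def clears_bar(
    observed_error_rate: float, tier: RiskTier, human_review_in_place: bool
) -> bool:
    """True when the error rate and the oversight both meet the tier's bar."""
    if tier.human_review_required and not human_review_in_place:
        return False
    return observed_error_rate <= tier.max_error_rate


# Usage: the same 5% error rate clears one tier and fails the other.
print(clears_bar(0.05, TIERS["internal_drafting"], True))
print(clears_bar(0.05, TIERS["regulated_workflow"], True))
```

The value of a table like this is less the numbers than the fact that someone had to agree on them before launch.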

That is why ResearchOps matters here in a concrete way. Someone has to manage the golden datasets, ground-truth libraries, and longitudinal trust studies that keep evaluation anchored to reality. Someone has to track when users start over-trusting fluent output, where HACR and review burden are creeping up, and which edge cases are degrading confidence over time. That is not side work. It is part of the product’s evidence layer.

DesignOps then turns that evidence into a scalable operating practice. It should establish the rubrics, experience heuristics, accessibility criteria, and review checkpoints that define the quality bar. Engineering can then build the evaluation harness, observability, and automated testing around those definitions. That division of labor matters because the system should not only test whether outputs are stable; it should test whether they are useful, safe, and usable.

From there, DesignOps can define when a prompt change, retrieval change, or provider update triggers mandatory regression testing. It can make sure squads are not comparing outputs casually in Slack and calling that validation.

Most importantly, these checkpoints have to live inside the delivery pipeline. If AI evaluation, accessibility review, prompt regression, and risk review are not part of the Definition of Ready and Definition of Done, they will remain optional rituals. At scale, the honest move is to wire them into Jira or Azure DevOps gates so teams cannot move from pilot to production without proving the workflow still clears the bar.
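Whatever tool hosts the gate, the logic behind it is small. The sketch below is tool-agnostic and does not call any Jira or Azure DevOps API. The check names mirror the four reviews listed above, and the evidence dictionary is a hypothetical input that a pipeline step would populate.

```python
# The four checks named above, treated as required evidence for promotion.
REQUIRED_CHECKS = (
    "ai_evaluation",
    "accessibility_review",
    "prompt_regression",
    "risk_review",
)


def missing_checks(evidence: dict[str, bool]) -> list[str]:
    """Return the required checks that are absent or not passing."""
    return [check for check in REQUIRED_CHECKS if not evidence.get(check, False)]


def can_promote_to_production(evidence: dict[str, bool]) -> bool:
    """A workflow may leave pilot only when every required check has passed."""
    return not missing_checks(evidence)


# Usage: risk review was never recorded, so promotion is blocked.
pilot_evidence = {
    "ai_evaluation": True,
    "accessibility_review": True,
    "prompt_regression": True,
}
print(can_promote_to_production(pilot_evidence), missing_checks(pilot_evidence))
```

Treating a missing check the same as a failed one is the design choice that matters: silence should never count as a pass.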

Without that layer, “testability” stays vague, and vague quality standards are how unreliable systems reach production.

Rule: Do not rely on authority or sunk cost. Do not confuse investment with evidence

Why This Matters: In enterprise AI, the cost of baloney compounds fast.

A weak AI strategy does not just produce a weak feature. It often produces a wrapper product with no durable advantage, high inference costs, and a maintenance burden that grows every time the underlying model vendor updates capabilities. Three months later, the market catches up, the platform provider ships the same feature natively, and the internal team is left maintaining expensive differentiation that never really differentiated.

That is the real cost of baloney in enterprise environments. Compute spend. Talent burn. Integration debt. Security review. Change management. Procurement cycles. A roadmap bent around a capability that looked important before anyone proved it mattered.

This is also where procurement needs its own baloney detection step. Most enterprises are not building every AI workflow from scratch. They are buying black-box capability through SaaS vendors and integrations. If a vendor cannot provide a usable definition of good, explain how quality is evaluated, document model-change behavior, or let you test the feature against your own scenarios, they are asking you to buy belief instead of evidence.

The sunk cost fallacy does its real damage here. The most expensive baloney in the enterprise is often the model you built yourself that performs worse than a commodity API but has become politically too big to fail. A company spends millions on a custom model or internal AI program, and the results underperform a generic API. Instead of reassessing, leadership doubles down because the political cost of admitting the miss feels worse than the financial cost of continuing it.
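A like-for-like comparison helps take the politics out of that decision. The sketch below compares options on cost per successful task over the same period and the same task set. The `Option` record and its fields are hypothetical, and any figures passed in would need to come from real billing and evaluation data.

```python
from dataclasses import dataclass


@dataclass
class Option:
    """One way of delivering the capability (hypothetical record shape)."""
    name: str
    total_cost: float      # inference plus maintenance for the period
    tasks_succeeded: int   # tasks completed without material correction


def cost_per_successful_task(option: Option) -> float:
    if option.tasks_succeeded <= 0:
        raise ValueError(f"{option.name} has no successful tasks to divide by")
    return option.total_cost / option.tasks_succeeded


def cheaper_per_success(a: Option, b: Option) -> Option:
    """Return the option with the lower cost per successful task."""
    return min((a, b), key=cost_per_successful_task)
```

Sunk cost never appears in this calculation, which is the point: only forward-looking cost and measured success belong in it.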

Sagan would have recognized this immediately. Authority is not proof. Prior investment is not proof. Organizational prestige is not proof. In AI governance, mature teams need a mechanism for saying: this initiative no longer clears the bar, and the honest move is to narrow it, reframe it, or stop it. The same standard should apply during vendor selection, renewal, and expansion reviews.

That is not failure. That is evidence doing its job.

The strategic advantage is not speed. It is disciplined disbelief.

Sagan’s kit was never about sounding smarter than other people. It was about becoming harder to fool, especially by yourself.

That is the right posture for AI product strategy now.

The teams that will do well are not the ones with the loudest AI narrative. They are the ones that build repeatable ways to challenge claims, measure outputs, govern prompts, regression-test workflows, pressure-test vendors, and decide when a model belongs in the workflow at all. In enterprise settings, that discipline is not bureaucracy. It is product quality, operational resilience, compliance readiness, and cost control wearing the same coat.

In a market that rewards motion, skepticism can look slow. Usually, it is the only thing keeping the organization from scaling nonsense.

“Extraordinary claims require extraordinary evidence.”

— Carl Sagan

Key takeaways

- DesignOps should scale skepticism through self-service standards, semantic prompt versioning, automated testing, and delivery gates, not through centralized approvals.

- ResearchOps keeps AI evaluation honest by managing ground-truth datasets, longitudinal trust signals, and human-in-the-loop review patterns.

- Enterprise AI requires probabilistic evaluation, accessibility audits, vendor scrutiny, and a risk-utility model tied to the blast radius of the use case.

References

- DORA metrics overview (Google Cloud)

- 2024 Accelerate State of DevOps / DORA report announcement (Google Cloud)

- Announcing the 2025 DORA Report: State of AI-Assisted Software Development (Google Cloud Blog)

- 2025 DORA State of AI-Assisted Software Development report landing page (Google Cloud)

- Introducing DORA’s inaugural AI Capabilities Model (Google Cloud Blog)

- Web Content Accessibility Guidelines (WCAG) 2.2 (W3C)

- Understanding WCAG 2.2 (W3C WAI)

- NIST AI Risk Management Framework overview (NIST)

- NIST AI RMF Playbook (NIST)

- Regulation (EU) 2024/1689 – Artificial Intelligence Act (EUR-Lex)

- AI Act high-level summary with implementation materials

- OpenAI: How evals drive the next chapter in AI for businesses

- OpenAI API: Working with evals

- OpenAI API: Evaluation best practices

- OpenAI Cookbook: Detecting prompt regressions

- OpenAI Cookbook: Building resilient prompts using an evaluation flywheel

- Semantic Versioning 2.0.0

- Atlassian: Definition of Done

- Azure DevOps best practices for Agile product management